2D GPU displacement mapping

Overview

This document attempts to describe 2D displacement mapping. This technique has a wide variety of uses, as will soon become obvious. The reader is not expected to have any real knowledge of writing OpenGL shaders, but the details of creating and loading shaders, creating an OpenGL context, and so on are outside the scope of this document.

Displacement is essentially about affecting the position of a thing using another thing, as shocking as that may seem. In less silly terms, displacement mapping is about affecting the position of parts of an object (vertices, pixels) by using values obtained from a displacement map (usually a texture in current graphics systems).

Examples

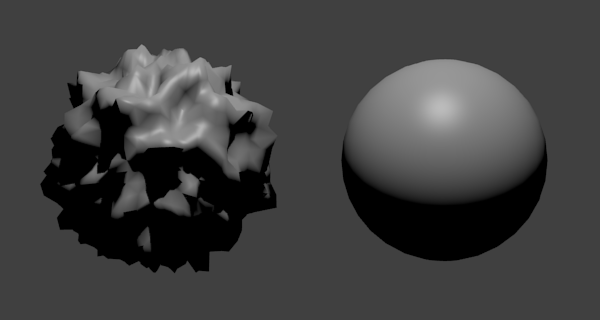

3D vertex displacement

3D vertex displacement is used by artists to produce extremely complex models using only simple meshes and textures.

In the above image, each vertex is translated along its own normal vector by a value K, where K is obtained by:

- Look up the pixel P at coordinates (s, t) in an associated greyscale texture.

- Interpret P as a value in the range -0.5 .. 0.5 inclusive.

- (Optionally) Multiply P by some configurable maximum M.

The actual (s, t) coordinates used can be picked at random or, more commonly, can simply be the texture coordinates associated with each vertex in the mesh, as is normal with any textured model. Higher values of M result in more pronounced displacement.

The displacement map/texture used in the depicted example is a randomly generated greyscale noise texture.
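The per-vertex steps above can be sketched on the CPU as follows. This is a minimal illustration, not the code that produced the image. It assumes that the greyscale lookup at (s, t) has already produced an 8-bit grey level (0..255) and that the vertex normal is unit length. The class and method names are hypothetical.

```java
/**
 * Hypothetical sketch of 3D vertex displacement along the vertex normal.
 * Assumes the displacement map lookup has already produced an 8-bit grey level.
 */
final class VertexDisplace {
  private VertexDisplace() {}

  /** Maps an 8-bit grey level (0..255) to P in the range -0.5 .. 0.5 inclusive. */
  static double signedLevel(int grey) {
    return grey / 255.0 - 0.5;
  }

  /**
   * Returns position + normal * K, where K = P * m.
   * The normal is assumed to be unit length.
   */
  static double[] displace(double[] position, double[] normal, int grey, double m) {
    final double k = signedLevel(grey) * m;
    return new double[] {
      position[0] + normal[0] * k,
      position[1] + normal[1] * k,
      position[2] + normal[2] * k,
    };
  }
}
```

A mid-grey value leaves the vertex where it is. Black and white push it inwards or outwards along the normal by M / 2.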

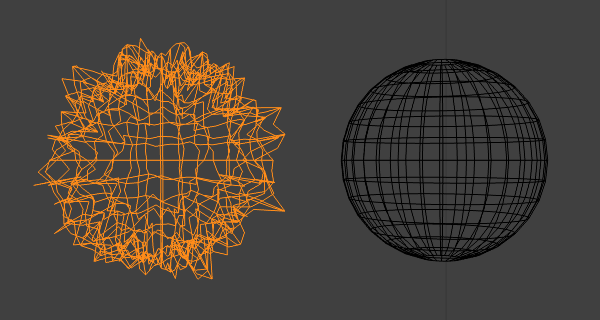

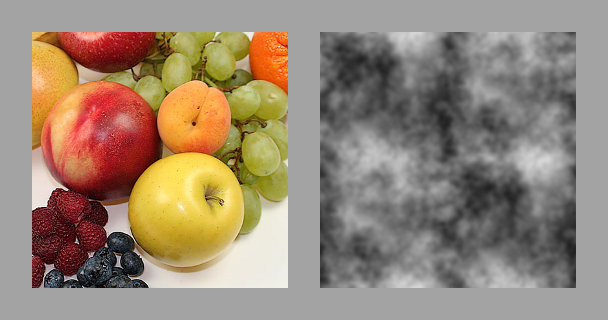

2D pixel displacement

2D pixel displacement can be used to produce complex procedural textures from simple components. A rippling "liquid" effect can be produced by displacing each pixel in a texture according to a second, generated texture.

In the above image, each pixel is translated up and left by K pixels, where K is obtained by:

- Look up the pixel P at coordinates (s, t) in an associated greyscale texture.

- Interpret P as a value in the range 0.0 .. 1.0 inclusive.

- Multiply P by 20.

The actual (s, t) coordinates used are the (x, y) coordinates of the corresponding pixel in the image being displaced.

Displacement, then, isn't the act of modifying pixels, but the act of modifying the coordinates that would have been used to select pixels.
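As a rough CPU analogue of the 2D case, the sketch below never moves a pixel. Instead, each output pixel at (x, y) reads the source pixel at (x + K, y + K), which has the effect of moving the image content up and left by K.

The sketch makes several illustrative assumptions:

- Pixels are packed ARGB ints in row-major order, with y increasing downwards (the java.awt.image.BufferedImage convention).
- K is read from the green channel of the map and rounded to whole pixels.
- Lookups past the image edges are clamped.
- The names are hypothetical.

```java
/**
 * Hypothetical CPU sketch of 2D pixel displacement. Images are packed ARGB
 * ints in row-major order, with y increasing downwards.
 */
final class PixelDisplace {
  private PixelDisplace() {}

  /** Green channel of a packed ARGB pixel, normalized to 0.0 .. 1.0. */
  static float green(int argb) {
    return ((argb >> 8) & 0xFF) / 255.0f;
  }

  static int clamp(int v, int lo, int hi) {
    return Math.max(lo, Math.min(hi, v));
  }

  /**
   * Returns an image in which the content appears moved up and left by
   * K = P * maximum pixels, where P is read from the map at the same (x, y).
   * Lookups outside the image are clamped to the nearest edge (an assumption).
   */
  static int[] displace(int[] image, int[] map, int width, int height, float maximum) {
    final int[] out = new int[width * height];
    for (int y = 0; y < height; ++y) {
      for (int x = 0; x < width; ++x) {
        final int k = Math.round(green(map[y * width + x]) * maximum);
        // Only the lookup coordinates change; pixel values are copied as-is.
        final int sx = clamp(x + k, 0, width - 1);
        final int sy = clamp(y + k, 0, height - 1);
        out[y * width + x] = image[sy * width + sx];
      }
    }
    return out;
  }
}
```

Passing a maximum of 20 corresponds to the K = P × 20 rule described above.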

Procedural textures

Modern OpenGL implementations allow for rendering to textures via the use of framebuffers. The programmer allocates space for an RGBA texture, creates a "framebuffer object", and then attaches the allocated texture to the framebuffer object as storage space for the color buffer. The programmer then "binds" the framebuffer object and performs rendering commands as normal. The output produced by the commands is written to the texture as opposed to the screen. This texture can then be used in subsequent rendering commands like any other texture.

The rest of this document consists of examples of combining 2D displacement mapping and render-to-texture techniques to produce a range of computationally inexpensive and deceptively complex-looking effects.

Examples are given in the Java programming language for the sake of keeping code platform independent, but no Java-specific features are used, and programs should be easily understood by programmers of other imperative languages (C, C++, Ada, etc.). The OpenGL library used is LWJGL.

The example code uses OpenGL 3.0 but does not use anything that is not present in OpenGL 2.1 other than framebuffer objects. Porting this code to OpenGL 2.1 is simple: see the ARB_framebuffer_object and/or EXT_framebuffer_object extensions. The shaders used are GLSL 1.10 compatible. To keep the code short and simple, and to avoid depending on external libraries for what should be short tutorial code, the example programs use the immediate mode glBegin()/glEnd() functions to specify vertices, and also the traditional OpenGL matrix stack.

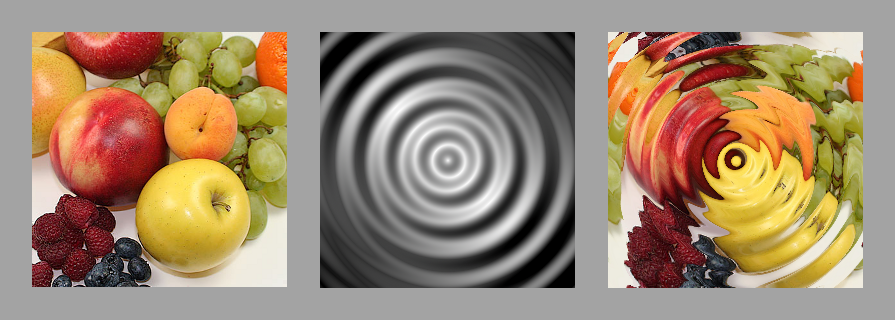

Ripple

Examples

The Ripple program takes two images as input (an image to be displaced and an image to be used as a displacement map) and displays a textured polygon with an animated "rippling" texture. The texture is animated by modifying the texture coordinates for the displacement map over time. It is possible to obtain a huge range of interesting effects just by using different displacement maps.

Program

The program depends on a set of very simple shaders. The shader_uv.v and shader_uv.f shaders do trivial vertex transformation and texturing. These programs are completely standard fare and only implement the bare minimum necessary to draw textured polygons.

The main displacement work takes place in shader_displace.f:

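```glsl
#version 110

// Texture coordinates interpolated from the vertex shader.
varying vec2 vertex_uv;

uniform sampler2D texture;      // Image to be displaced.
uniform sampler2D displace_map; // Greyscale displacement map.
uniform float maximum;          // Largest offset, in texture-space units.
uniform float time;             // Current time (the unit is unimportant).

void
main (void)
{
  // Scroll the displacement map lookup over time.
  float time_e = time * 0.001;
  vec2 uv_t = vec2(vertex_uv.s + time_e, vertex_uv.t + time_e);

  // Read the offset from the greyscale map and scale it.
  vec4 displace = texture2D(displace_map, uv_t);
  float displace_k = displace.g * maximum;

  // Offset the coordinates used to sample the image, not the image itself.
  vec2 uv_displaced = vec2(vertex_uv.x + displace_k,
                           vertex_uv.y + displace_k);

  gl_FragColor = texture2D(texture, uv_displaced);
}
```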

The program takes the current texture coordinates (passed from the vertex shader and interpolated across the polygon), a texture, a displacement map, a scaling value (maximum), and the current time (in frames, although the time unit is not important).

First, the current texture coordinates vertex_uv are translated by the current scaled time value time_e. Then, a pixel is read from the displacement map at the resulting texture coordinates. The displacement map is assumed to be greyscale. Pixels are represented as four-element RGBA vectors with floating point components. The shader reads the green channel of the pixel (but would of course get identical results reading from either the red or blue channels with a greyscale image), and then scales this value by maximum to obtain a final offset value displace_k.

Note that this value is in texture-space units, not pixels: in a 256x256 pixel image, a value of 0.25 would represent 64 pixels. The program then adds displace_k to the original interpolated texture coordinates and retrieves a pixel from the current texture using the resulting coordinates.
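The shader's arithmetic is small enough to mirror in plain Java, which can be handy for checking values by hand. The sketch below is a hypothetical CPU port, not part of the original program. In it, greenAt stands in for texture2D(displace_map, uv).g. Any wrapping of coordinates outside 0.0 .. 1.0 is left to that function, since the texture wrap mode is not specified here.

```java
import java.util.function.DoubleBinaryOperator;

/** Hypothetical CPU port of the arithmetic in shader_displace.f. */
final class DisplaceMath {
  private DisplaceMath() {}

  /**
   * Returns the displaced texture coordinates for (s, t).
   * greenAt stands in for texture2D(displace_map, uv).g.
   */
  static double[] displacedUv(
      double s, double t, double time, double maximum, DoubleBinaryOperator greenAt) {
    final double timeE = time * 0.001;                      // time_e
    final double g = greenAt.applyAsDouble(s + timeE, t + timeE);
    final double k = g * maximum;                           // displace_k
    return new double[] {s + k, t + k};                     // uv_displaced
  }

  /** Converts a texture-space offset to texels for a texture of the given size. */
  static double toTexels(double offset, int size) {
    return offset * size;
  }
}
```

For example, toTexels(0.25, 256) gives the 64 pixels mentioned above. Because time_e = time * 0.001, the displacement map lookup shifts by one whole texture width every 1000 time units.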

The OpenGL program that drives the shaders is similarly simple. The program loads the requested image and displacement map image, allocates a framebuffer with a blank texture as color buffer storage, and compiles and loads the relevant shading programs. These uninteresting but essential functions are implemented in the Utilities class.

```java
Ripple(
  final String image,
  final String displace)
  throws IOException
{
  this.texture_image = Utilities.loadTexture(image);
  this.texture_displacement_map = Utilities.loadTexture(displace);

  this.framebuffer_texture =
    Utilities.createEmptyTexture(
      Ripple.TEXTURE_WIDTH,
      Ripple.TEXTURE_HEIGHT);
  this.framebuffer = Utilities.createFramebuffer(this.framebuffer_texture);

  this.shader_uv = Utilities.createShader("dist/shader_uv.v", "dist/shader_uv.f");
  this.shader_displace =
    Utilities.createShader("dist/shader_uv.v", "dist/shader_displace.f");
}
```

Rendering involves two steps. First, the program needs to generate a texture based on the loaded image and displacement map. It does this by binding the allocated framebuffer and then rendering a fullscreen textured quad using the previously mentioned displacement shader.

```java
private void renderToTexture()
{
  GL11.glMatrixMode(GL11.GL_PROJECTION);
  GL11.glLoadIdentity();
  GL11.glOrtho(0, 1, 0, 1, 1, 100);
  GL11.glMatrixMode(GL11.GL_MODELVIEW);
  GL11.glLoadIdentity();
  GL11.glTranslated(0, 0, -1);

  GL30.glBindFramebuffer(GL30.GL_FRAMEBUFFER, this.framebuffer);
  {
    // Clear only after binding the framebuffer, so that the framebuffer
    // texture is cleared rather than the window.
    GL11.glViewport(0, 0, Ripple.TEXTURE_WIDTH, Ripple.TEXTURE_HEIGHT);
    GL11.glClearColor(0.25f, 0.25f, 0.25f, 1.0f);
    GL11.glClear(GL11.GL_COLOR_BUFFER_BIT);

    // Unit 0: the image to be displaced. Unit 1: the displacement map.
    GL13.glActiveTexture(GL13.GL_TEXTURE0);
    GL11.glBindTexture(GL11.GL_TEXTURE_2D, this.texture_image);
    GL13.glActiveTexture(GL13.GL_TEXTURE1);
    GL11.glBindTexture(GL11.GL_TEXTURE_2D, this.texture_displacement_map);

    GL20.glUseProgram(this.shader_displace);
    {
      final int ut = GL20.glGetUniformLocation(this.shader_displace, "texture");
      final int udm = GL20.glGetUniformLocation(this.shader_displace, "displace_map");
      final int umax = GL20.glGetUniformLocation(this.shader_displace, "maximum");
      final int utime = GL20.glGetUniformLocation(this.shader_displace, "time");

      GL20.glUniform1i(ut, 0);
      GL20.glUniform1i(udm, 1);
      GL20.glUniform1f(umax, 0.2f);
      GL20.glUniform1f(utime, this.time);
      Utilities.checkGL();

      // Quad covering the unit square mapped to the viewport by glOrtho().
      GL11.glBegin(GL11.GL_QUADS);
      {
        GL11.glTexCoord2f(0, 1);
        GL11.glVertex3d(0, 1, 0);
        GL11.glTexCoord2f(0, 0);
        GL11.glVertex3d(0, 0, 0);
        GL11.glTexCoord2f(1, 0);
        GL11.glVertex3d(1, 0, 0);
        GL11.glTexCoord2f(1, 1);
        GL11.glVertex3d(1, 1, 0);
      }
      GL11.glEnd();
    }
    GL20.glUseProgram(0);

    GL13.glActiveTexture(GL13.GL_TEXTURE0);
    GL11.glBindTexture(GL11.GL_TEXTURE_2D, 0);
    GL13.glActiveTexture(GL13.GL_TEXTURE1);
    GL11.glBindTexture(GL11.GL_TEXTURE_2D, 0);
  }
  GL30.glBindFramebuffer(GL30.GL_FRAMEBUFFER, 0);
  Utilities.checkGL();
}
```
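To see why the quad above fills the framebuffer texture exactly, the glOrtho() and glViewport() transformations can be evaluated by hand. The sketch below reproduces the standard formulas for those two calls; it does not call OpenGL, and the class and method names are hypothetical.

With glOrtho(0, 1, 0, 1, 1, 100), the range 0 .. 1 in x and y maps to normalized device coordinates -1 .. 1. That in turn maps to window pixels 0 .. TEXTURE_WIDTH and 0 .. TEXTURE_HEIGHT. Meanwhile, glTranslated(0, 0, -1) places the quad exactly on the near clipping plane.

```java
/** Hypothetical helper reproducing the glOrtho() and glViewport() arithmetic. */
final class OrthoMapping {
  private OrthoMapping() {}

  /** glOrtho mapping of one axis from [lo, hi] to normalized device coordinates [-1, 1]. */
  static double orthoAxis(double v, double lo, double hi) {
    return 2.0 / (hi - lo) * v - (hi + lo) / (hi - lo);
  }

  /** glOrtho depth mapping: eye-space z = -near maps to -1 and z = -far maps to 1. */
  static double orthoDepth(double zEye, double near, double far) {
    return -2.0 / (far - near) * zEye - (far + near) / (far - near);
  }

  /** glViewport mapping of one axis from normalized device coordinates to window coordinates. */
  static double toWindow(double ndc, int origin, int size) {
    return (ndc + 1.0) * 0.5 * size + origin;
  }
}
```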

After the above function has executed, framebuffer_texture contains the "displaced" texture. The program then draws a textured quad to the screen:

```java
private void renderScene()
{
  GL11.glMatrixMode(GL11.GL_PROJECTION);
  GL11.glLoadIdentity();
  GL11.glFrustum(-1, 1, -1, 1, 1, 100);
  GL11.glMatrixMode(GL11.GL_MODELVIEW);
  GL11.glLoadIdentity();
  GL11.glTranslated(0, 0, -1.25);
  GL11.glRotated(30, 0, 0, 1);

  GL11.glViewport(0, 0, Ripple.SCREEN_WIDTH, Ripple.SCREEN_HEIGHT);
  GL11.glClearColor(0.25f, 0.25f, 0.25f, 1.0f);
  GL11.glClear(GL11.GL_COLOR_BUFFER_BIT);

  GL20.glUseProgram(this.shader_uv);
  {
    GL13.glActiveTexture(GL13.GL_TEXTURE0);
    GL11.glBindTexture(GL11.GL_TEXTURE_2D, this.framebuffer_texture);
    Utilities.checkGL();

    final int ut = GL20.glGetUniformLocation(this.shader_uv, "texture");
    GL20.glUniform1i(ut, 0);
    Utilities.checkGL();

    GL11.glBegin(GL11.GL_QUADS);
    {
      GL11.glTexCoord2f(0, 0);
      GL11.glVertex3d(-0.75, 0.75, 0);
      GL11.glTexCoord2f(0, 1);
      GL11.glVertex3d(-0.75, -0.75, 0);
      GL11.glTexCoord2f(1, 1);
      GL11.glVertex3d(0.75, -0.75, 0);
      GL11.glTexCoord2f(1, 0);
      GL11.glVertex3d(0.75, 0.75, 0);
    }
    GL11.glEnd();
  }
  GL20.glUseProgram(0);
  Utilities.checkGL();
}
```
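The on-screen size of the rotated quad follows from the modelview and projection set up at the top of renderScene(). The hypothetical helper below applies glRotated(30, 0, 0, 1), then glTranslated(0, 0, -1.25), then the symmetric glFrustum(-1, 1, -1, 1, 1, 100) to a corner of the quad. It uses the standard formula for a frustum with symmetric bounds and computes only x and y in normalized device coordinates.

```java
/** Hypothetical helper projecting a quad corner the way renderScene() does. */
final class FrustumMapping {
  private FrustumMapping() {}

  /**
   * Returns normalized device (x, y) for the object-space point (x, y, 0) after
   * glRotated(degrees, 0, 0, 1), glTranslated(0, 0, dz) and
   * glFrustum(-1, 1, -1, 1, near, far). The far value does not affect x or y.
   */
  static double[] project(double x, double y, double degrees, double dz, double near) {
    final double a = Math.toRadians(degrees);
    // The modelview matrix is T * R, so the vertex is rotated first...
    final double rx = x * Math.cos(a) - y * Math.sin(a);
    final double ry = x * Math.sin(a) + y * Math.cos(a);
    // ...then translated along z. The frustum stores -z in w, so the
    // perspective divide is by -dz. For l = -1, r = 1, x_clip = near * x.
    final double w = -dz;
    return new double[] {near * rx / w, near * ry / w};
  }
}
```

Without the rotation, the corner (0.75, 0.75) lands at (0.6, 0.6), so the quad spans 60% of the viewport in each direction. The 30 degree rotation moves the corners around the center without changing their distance from it.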

It is, of course, possible to render directly to the screen using the displacement shader. The program described here avoids doing that in order to demonstrate that the resulting procedural texture is an ordinary texture that can be used in the same manner as any other.

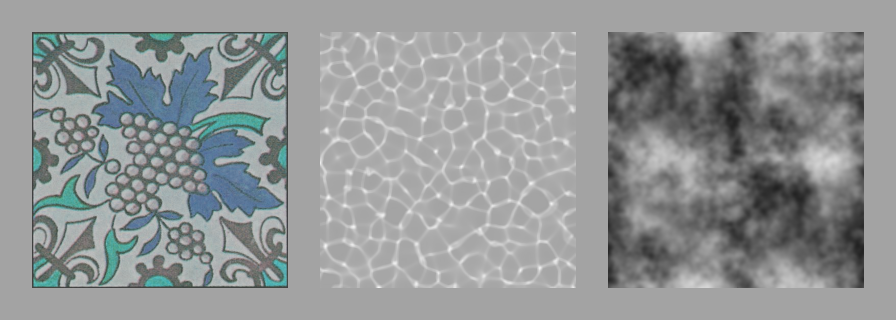

Caustics

Examples

A simple extension of the Ripple program with multitexturing and blending results in the Caustics program. The program works almost identically to Ripple, but takes an extra texture as input. One of the textures is modified through displacement mapping as before and then layered over the top of the other with trivial alpha compositing. When fed an image of ceramic tiles, a transparent "caustics" texture, and a cloudlike displacement map, the program gives a believable approximation of the effect of sunlight shining through water onto the bottom of a swimming pool.

Program

Most of the program's source code is the same as before. The program loads all necessary textures and shaders, and creates a framebuffer. The only significant differences are the extra texture and the use of the multitexturing shader (shader_multi_uv.f), which simply blends and applies two incoming textures.

```java
Caustics(
  final String underlay,
  final String overlay,
  final String displace)
  throws IOException
{
  this.texture_underlay = Utilities.loadTexture(underlay);
  this.texture_overlay = Utilities.loadTexture(overlay);
  this.texture_displacement_map = Utilities.loadTexture(displace);

  this.framebuffer_texture =
    Utilities.createEmptyTexture(
      Caustics.TEXTURE_WIDTH,
      Caustics.TEXTURE_HEIGHT);
  this.framebuffer = Utilities.createFramebuffer(this.framebuffer_texture);

  this.shader_uv =
    Utilities.createShader("dist/shader_uv.v", "dist/shader_multi_uv.f");
  this.shader_displace =
    Utilities.createShader("dist/shader_uv.v", "dist/shader_displace.f");
}
```

Rendering to a texture happens as before, except that the overlay texture is the one being displaced and the maximum offset is reduced to 0.1:

```java
private void renderToTexture()
{
  GL11.glMatrixMode(GL11.GL_PROJECTION);
  GL11.glLoadIdentity();
  GL11.glOrtho(0, 1, 0, 1, 1, 100);
  GL11.glMatrixMode(GL11.GL_MODELVIEW);
  GL11.glLoadIdentity();
  GL11.glTranslated(0, 0, -1);

  GL30.glBindFramebuffer(GL30.GL_FRAMEBUFFER, this.framebuffer);
  {
    // Clear only after binding the framebuffer, so that the framebuffer
    // texture is cleared rather than the window.
    GL11.glViewport(0, 0, Caustics.TEXTURE_WIDTH, Caustics.TEXTURE_HEIGHT);
    GL11.glClearColor(0.25f, 0.25f, 0.25f, 1.0f);
    GL11.glClear(GL11.GL_COLOR_BUFFER_BIT);

    // Unit 0: the overlay to be displaced. Unit 1: the displacement map.
    GL13.glActiveTexture(GL13.GL_TEXTURE0);
    GL11.glBindTexture(GL11.GL_TEXTURE_2D, this.texture_overlay);
    GL13.glActiveTexture(GL13.GL_TEXTURE1);
    GL11.glBindTexture(GL11.GL_TEXTURE_2D, this.texture_displacement_map);

    GL20.glUseProgram(this.shader_displace);
    {
      final int ut =
        GL20.glGetUniformLocation(this.shader_displace, "texture");
      final int udm =
        GL20.glGetUniformLocation(this.shader_displace, "displace_map");
      final int umax =
        GL20.glGetUniformLocation(this.shader_displace, "maximum");
      final int utime =
        GL20.glGetUniformLocation(this.shader_displace, "time");

      GL20.glUniform1i(ut, 0);
      GL20.glUniform1i(udm, 1);
      GL20.glUniform1f(umax, 0.1f);
      GL20.glUniform1f(utime, this.time);
      Utilities.checkGL();

      GL11.glBegin(GL11.GL_QUADS);
      {
        GL11.glTexCoord2f(0, 1);
        GL11.glVertex3d(0, 1, 0);
        GL11.glTexCoord2f(0, 0);
        GL11.glVertex3d(0, 0, 0);
        GL11.glTexCoord2f(1, 0);
        GL11.glVertex3d(1, 0, 0);
        GL11.glTexCoord2f(1, 1);
        GL11.glVertex3d(1, 1, 0);
      }
      GL11.glEnd();
    }
    GL20.glUseProgram(0);

    GL13.glActiveTexture(GL13.GL_TEXTURE0);
    GL11.glBindTexture(GL11.GL_TEXTURE_2D, 0);
    GL13.glActiveTexture(GL13.GL_TEXTURE1);
    GL11.glBindTexture(GL11.GL_TEXTURE_2D, 0);
  }
  GL30.glBindFramebuffer(GL30.GL_FRAMEBUFFER, 0);
  Utilities.checkGL();
}
```

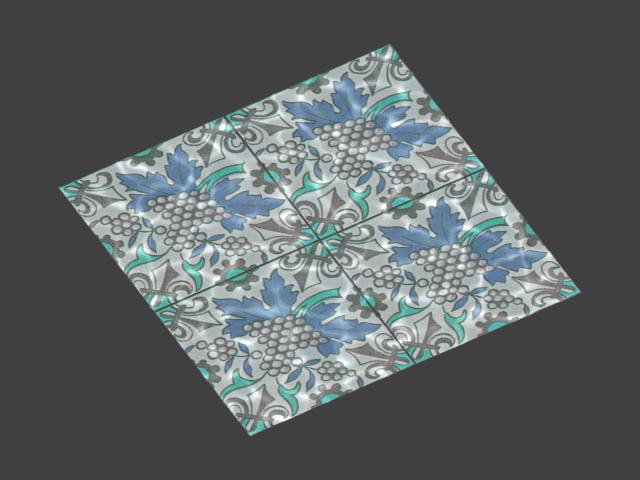

Now, framebuffer_texture contains a transparent displaced texture. Rendering the scene now differs only in that the texturing shader applies two textures to polygons instead of one. The shader takes extra parameters controlling how the textures are to be scaled, and a parameter that controls the degree of alpha blending. In effect, the "tile" texture is scaled by 50% and applied to the textured quad, and then the transparent "caustics" texture is applied over the top at 40% opacity.

```java
private void renderScene()
{
  GL11.glMatrixMode(GL11.GL_PROJECTION);
  GL11.glLoadIdentity();
  GL11.glFrustum(-1, 1, -1, 1, 1, 100);
  GL11.glMatrixMode(GL11.GL_MODELVIEW);
  GL11.glLoadIdentity();
  GL11.glTranslated(0, 0, -1.25);
  GL11.glRotated(30, 0, 0, 1);

  GL11.glViewport(0, 0, Caustics.SCREEN_WIDTH, Caustics.SCREEN_HEIGHT);
  GL11.glClearColor(0.25f, 0.25f, 0.25f, 1.0f);
  GL11.glClear(GL11.GL_COLOR_BUFFER_BIT);

  GL20.glUseProgram(this.shader_uv);
  {
    GL13.glActiveTexture(GL13.GL_TEXTURE0);
    GL11.glBindTexture(GL11.GL_TEXTURE_2D, this.texture_underlay);
    GL13.glActiveTexture(GL13.GL_TEXTURE1);
    GL11.glBindTexture(GL11.GL_TEXTURE_2D, this.framebuffer_texture);
    Utilities.checkGL();

    final int ut0 = GL20.glGetUniformLocation(this.shader_uv, "texture0");
    GL20.glUniform1i(ut0, 0);
    final int ut1 = GL20.glGetUniformLocation(this.shader_uv, "texture1");
    GL20.glUniform1i(ut1, 1);
    final int um = GL20.glGetUniformLocation(this.shader_uv, "mix");
    GL20.glUniform1f(um, 0.5f);
    final int us0 = GL20.glGetUniformLocation(this.shader_uv, "scale0");
    GL20.glUniform1f(us0, 2.0f);
    final int us1 = GL20.glGetUniformLocation(this.shader_uv, "scale1");
    GL20.glUniform1f(us1, 0.8f);
    Utilities.checkGL();

    GL11.glBegin(GL11.GL_QUADS);
    {
      GL11.glTexCoord2f(0, 0);
      GL11.glVertex3d(-0.75, 0.75, 0);
      GL11.glTexCoord2f(0, 1);
      GL11.glVertex3d(-0.75, -0.75, 0);
      GL11.glTexCoord2f(1, 1);
      GL11.glVertex3d(0.75, -0.75, 0);
      GL11.glTexCoord2f(1, 0);
      GL11.glVertex3d(0.75, 0.75, 0);
    }
    GL11.glEnd();
  }
  GL20.glUseProgram(0);
  Utilities.checkGL();
}
```
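The source of shader_multi_uv.f is not shown, so the exact meaning of the scale0, scale1 and mix uniforms is not given here. One plausible reading is sketched below purely as an assumption:

- Each texture's coordinates are multiplied by its scale and wrapped, as GL_REPEAT would do.
- The overlay is blended over the underlay with an effective opacity equal to the overlay texel's own alpha multiplied by an opacity factor.

All names are hypothetical.

```java
/**
 * Hypothetical sketch of a two-texture blend. The real shader_multi_uv.f is
 * not shown, so this is one plausible interpretation only.
 */
final class OverlayBlend {
  private OverlayBlend() {}

  /** Multiplies a texture coordinate by scale and wraps it into [0, 1), like GL_REPEAT. */
  static double scaledRepeat(double coord, double scale) {
    final double c = coord * scale;
    return c - Math.floor(c);
  }

  /**
   * Blends one colour channel of the overlay over the underlay. The effective
   * alpha is the overlay texel's own alpha multiplied by an opacity factor.
   */
  static double composite(double under, double overlay, double overlayAlpha, double opacity) {
    final double a = overlayAlpha * opacity;
    return under * (1.0 - a) + overlay * a;
  }
}
```

Under this reading, a scale of 2.0 repeats a texture twice across the quad. Each copy then appears at 50% of its original size, which is consistent with the description of the tile texture above.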

Lists

- 2.1.1. 3D vertex displacement

- 2.1.2. 3D vertex displacement (wireframe)

- 2.2.1. 2D pixel displacement

- 4.1.1. Ripple "concentric" input

- 4.1.2. Ripple "concentric" output

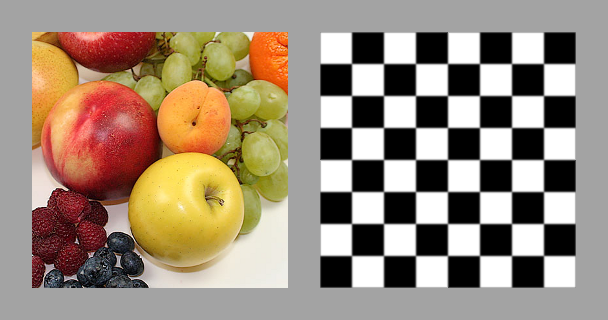

- 4.1.3. Ripple "chess" input

- 4.1.4. Ripple "chess" output

- 4.1.5. Ripple "clouds" input

- 4.1.6. Ripple "clouds" output

- 5.1.1. Caustics input

- 5.1.2. Caustics output