Glow maps

Overview

This document attempts to describe so-called glow maps: an extremely simple technique used in various 3D games (notably Deus Ex: Human Revolution) to provide dramatic and atmospheric lighting effects.

Blender implementation

Initial setup

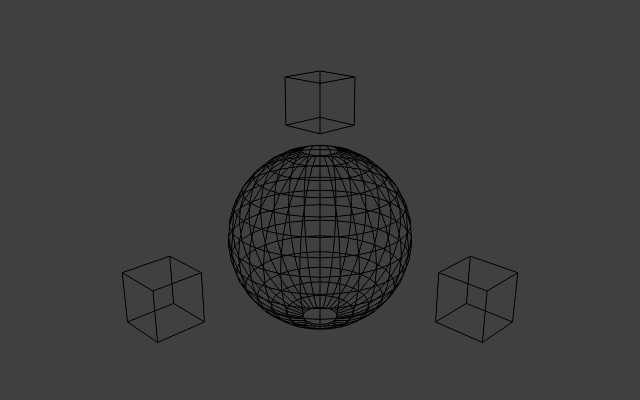

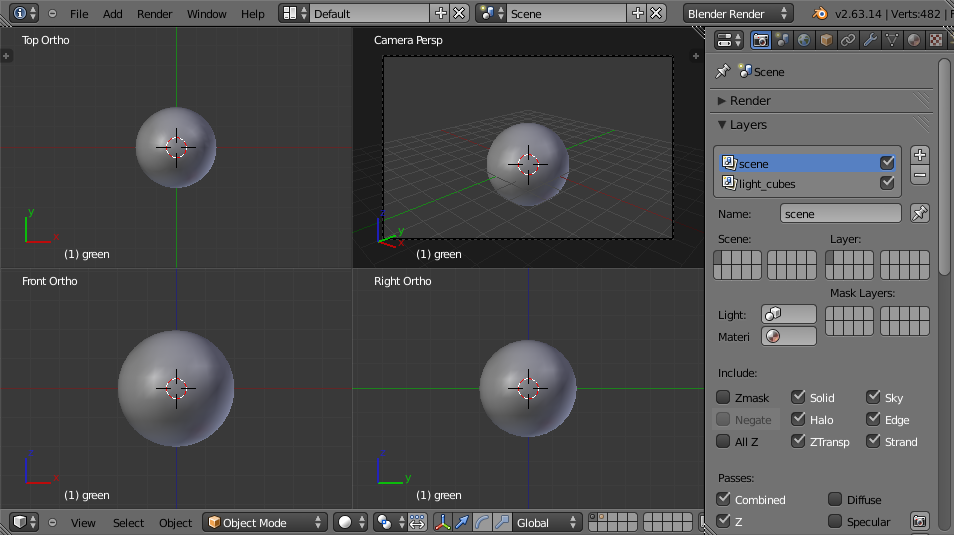

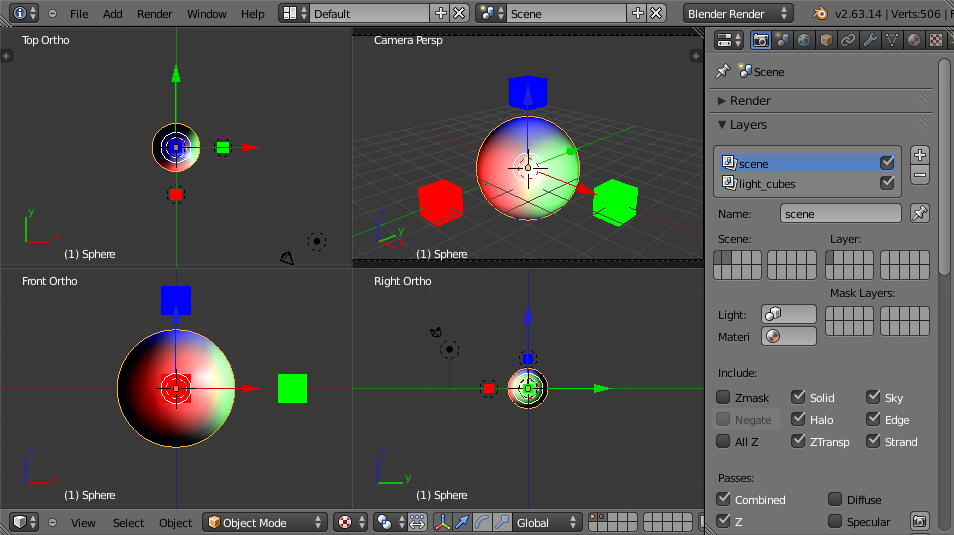

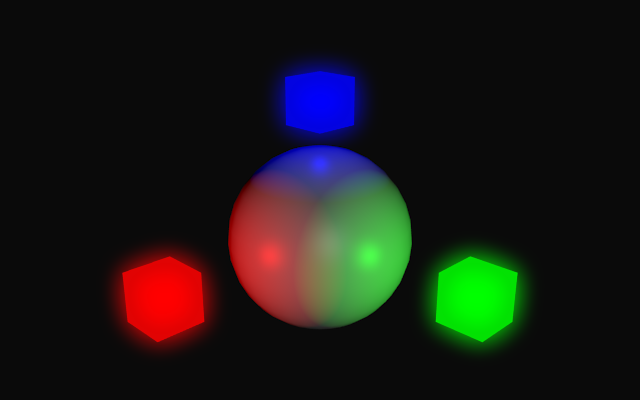

First, a simple scene is created, consisting of a sphere and three cubes. The sphere represents an arbitrary solid object, and the three small cubes represent lights.

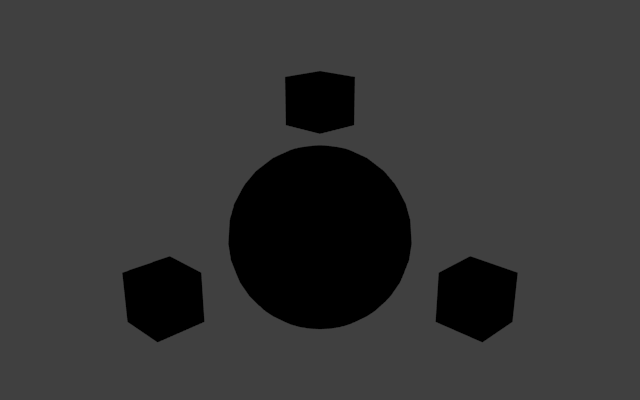

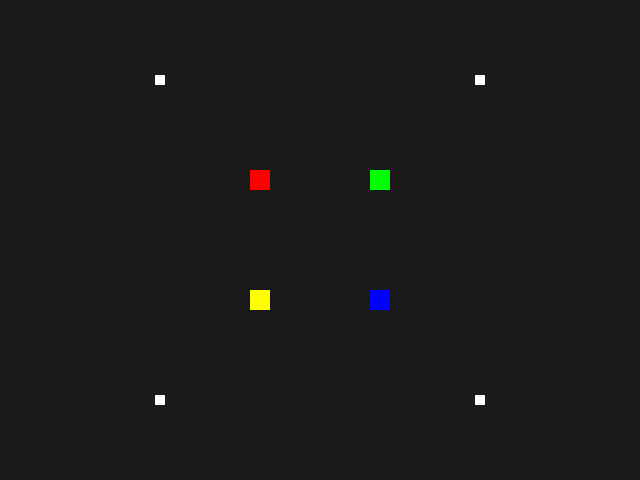

Naturally, without any real lights in the scene, the rendered scene is unimpressive:

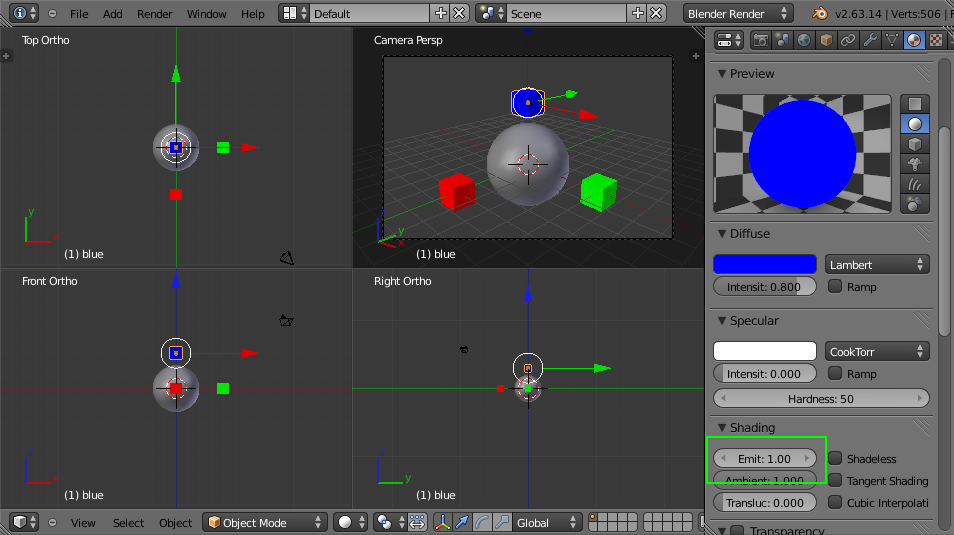

The first step is to mark each light object as "emissive". An object with an emissive level of 0.5 will always be drawn at a brightness of at least 0.5, regardless of any shadows or other lack of light. This is usually how, for example, luminous tube lights and neon signs are modelled. In Blender, the emissive level is part of an object's material. In OpenGL, the emissive level can be stored per object or per vertex. For each of the small "light" cubes, an emissive material is created:

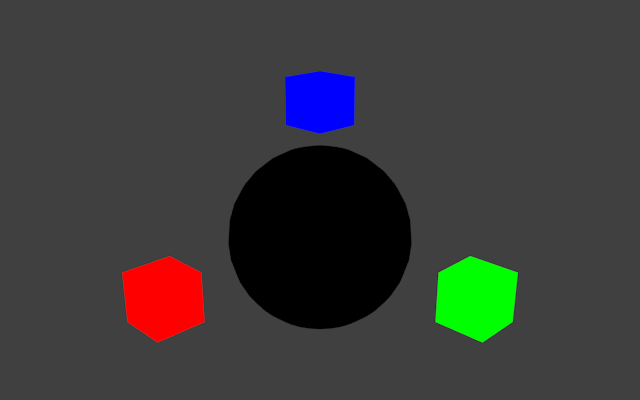

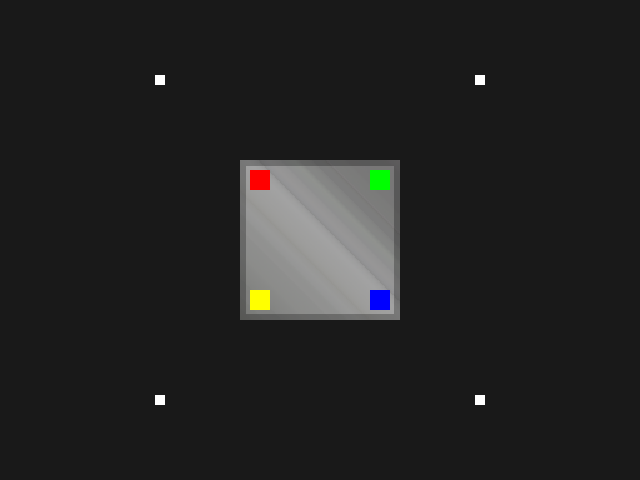

Rendering the scene demonstrates that the emissive cubes are effectively unaffected by the lack of light. Note that emissive materials do not actually emit light; they simply control how the object itself is shaded.

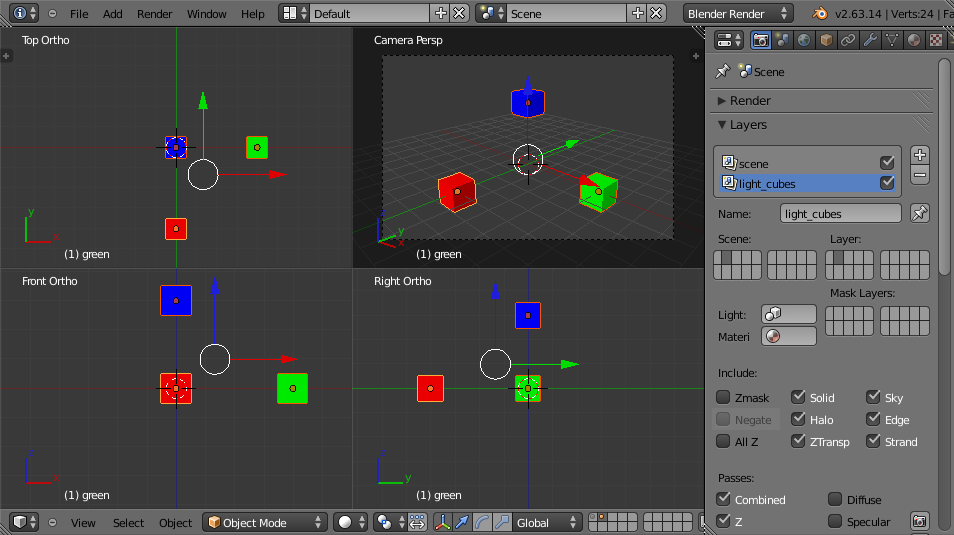
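The "at least 0.5 brightness" rule can be sketched as a per-channel shading function. This is a minimal sketch that assumes the emissive term is simply added to the light the object receives from the scene, with the sum clamped to 1. That mirrors the emission term in OpenGL's fixed-function lighting equation. The class and method names are hypothetical.

```java
/**
 * Minimal sketch of emissive shading for a single colour channel.
 * Hypothetical helper, not part of the original program.
 */
final class EmissiveShading {
    private EmissiveShading() {}

    /**
     * @param emissive the material's emissive level, in [0, 1]
     * @param lit      the brightness contributed by real lights, in [0, 1]
     * @return the shaded brightness, which is never below {@code emissive}
     */
    static double shade(double emissive, double lit) {
        // The emissive term is added unconditionally, so the result is at least
        // `emissive` even when `lit` is zero (full shadow). Clamp to the
        // displayable range.
        return Math.min(1.0, emissive + lit);
    }
}
```

An object's emissive level only ever affects its own `shade` result and never appears in another object's `lit` term. That is why real lights are still needed to illuminate the rest of the scene.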

Next, it's necessary to light the scene with real lights. Because the aim is to model small cubes that emit light, the light sources should ideally be placed inside the cubes. The obvious problem with this approach is that the cubes will not allow light to escape from inside them. The simplest solution is to place the small light cubes on a different layer and configure each light to affect only objects on its own layer. Two layers are created, with the sphere on one and the light cubes on the other:

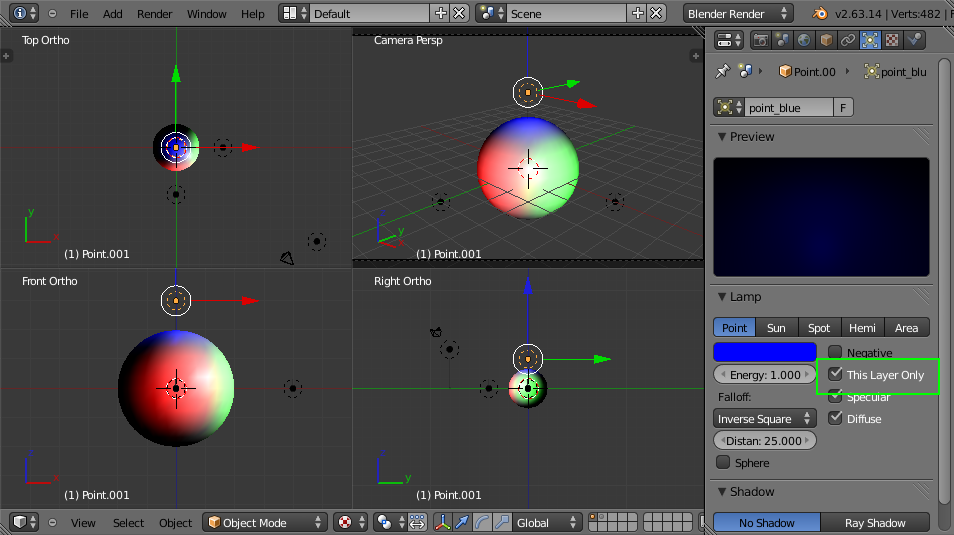

Next, point lights are created on the same layer as the sphere, and each individual light is configured to affect only that layer:

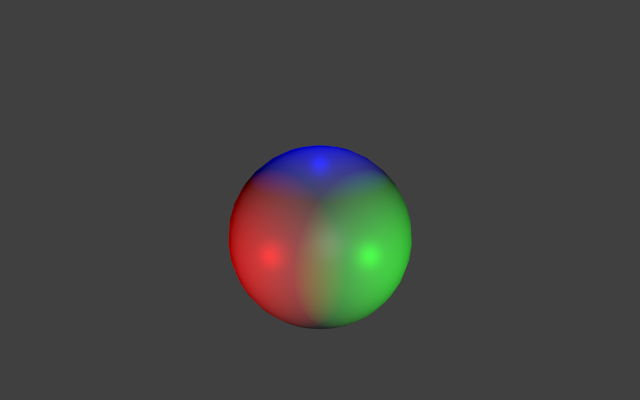
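The layer trick amounts to a test, for each light and each object, of whether the light should illuminate the object. A minimal sketch follows. It assumes layers are represented as a bitmask with one bit per layer, loosely modelled on Blender's layer buttons, and that a light restricted to its own layer only illuminates objects sharing at least one layer with it. `LayerLighting`, its constants and its method are hypothetical names, not Blender API.

```java
/** Hypothetical model of lights restricted to their own layer. */
final class LayerLighting {
    private LayerLighting() {}

    /** Layer bits for the scene described above. */
    static final int SPHERE_LAYER = 1 << 0;
    static final int CUBE_LAYER = 1 << 1;

    /**
     * Returns true if a light on {@code lightLayers} should illuminate an object
     * on {@code objectLayers}. With {@code ownLayerOnly} set, the light skips
     * objects that share no layer with it; otherwise it lights everything.
     */
    static boolean illuminates(int lightLayers, int objectLayers, boolean ownLayerOnly) {
        return !ownLayerOnly || (lightLayers & objectLayers) != 0;
    }
}
```

A point light placed inside a cube but assigned only to `SPHERE_LAYER` therefore lights the sphere but not the cube around it.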

Rendering the scene (with the layer containing the light cubes disabled) shows the effect of the point lights:

An overview of the current scene:

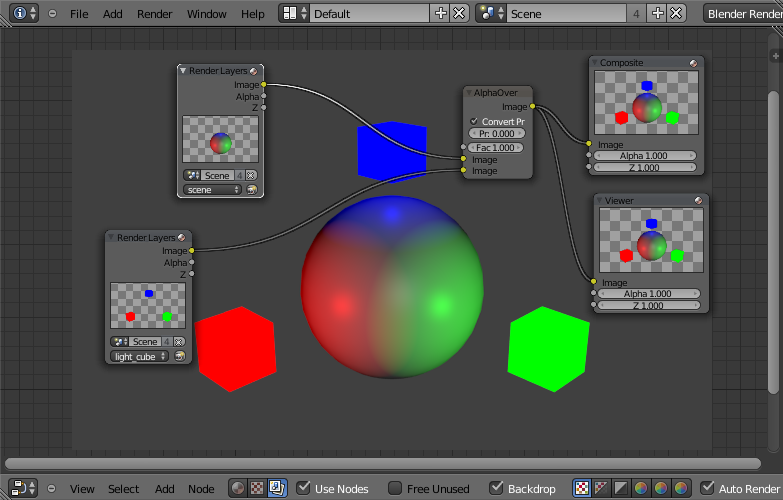

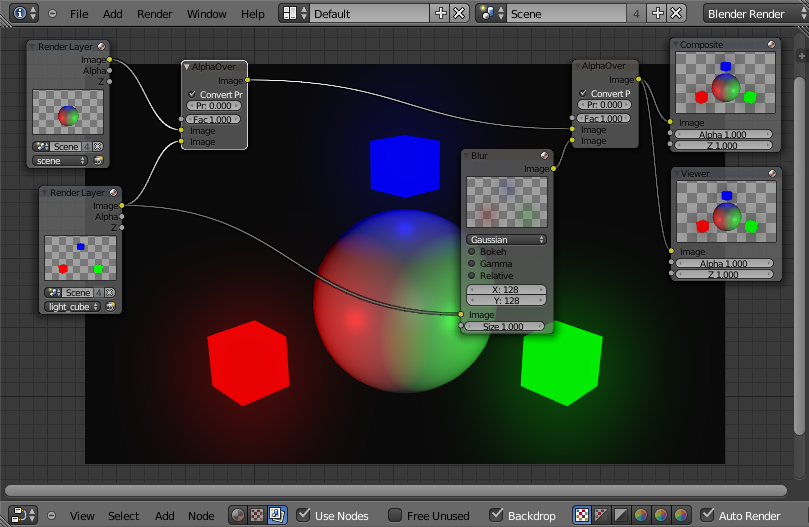

Now that the scene is split into layers, it's necessary to use Blender's compositing pipeline to combine the layers into the final image. Switching to the Node Editor and combining the two images using simple alpha compositing gives the expected result:
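"Simple alpha compositing" is usually the Porter-Duff "over" operator: the light-cube layer covers the sphere layer wherever it is opaque and lets it show through elsewhere. Below is a minimal per-pixel sketch, assuming straight (non-premultiplied) RGBA values in [0, 1]. `AlphaOver` is a hypothetical class and is not a description of how Blender's compositor is implemented internally.

```java
/** Hypothetical per-pixel sketch of the Porter-Duff "over" operator. */
final class AlphaOver {
    private AlphaOver() {}

    /**
     * Composites {@code fg} over {@code bg}. Both are straight (non-premultiplied)
     * RGBA arrays {r, g, b, a} with components in [0, 1].
     */
    static double[] over(double[] fg, double[] bg) {
        double fa = fg[3];
        double ba = bg[3];
        // Coverage of the result: the foreground plus whatever background shows through.
        double outA = fa + ba * (1.0 - fa);
        double[] out = new double[4];
        out[3] = outA;
        if (outA == 0.0) {
            return out; // Fully transparent result: colour is irrelevant, leave it black.
        }
        for (int c = 0; c < 3; c++) {
            // Sum the premultiplied contributions, then un-premultiply.
            out[c] = (fg[c] * fa + bg[c] * ba * (1.0 - fa)) / outA;
        }
        return out;
    }
}
```

When the background layer is fully opaque, `outA` is 1 and each colour channel reduces to `fg * a + bg * (1 - a)`.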

Glow mapping

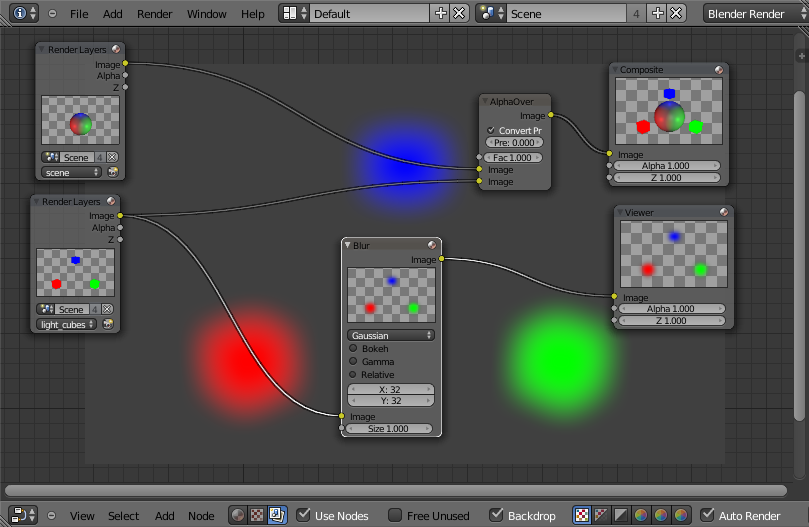

A glow map can be thought of as a blurred

copy of the light sources in the scene. Producing the map is trivial and

simply involves passing the layer containing the light cubes through a

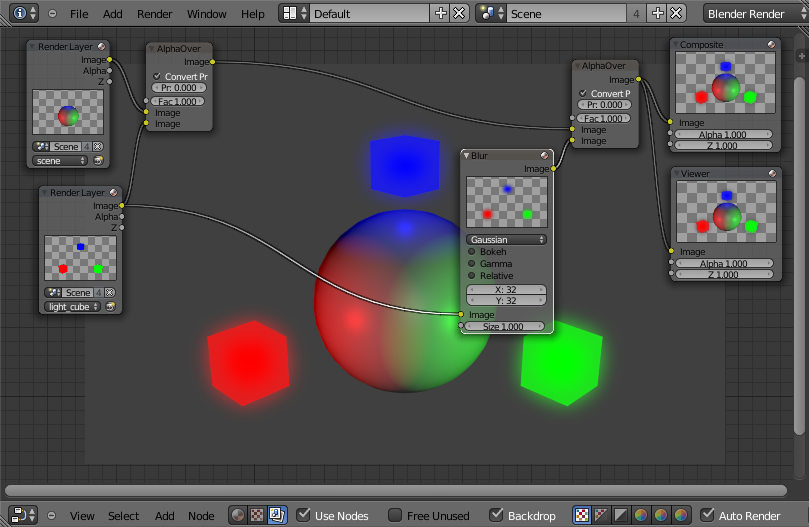

gaussian blur filter:
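A Gaussian blur replaces each pixel with a weighted average of its neighbours, with weights taken from the Gaussian function exp(-x²/(2σ²)). The following minimal sketch computes normalized 1D weights. It assumes the kernel is truncated at a radius of ceil(3σ), a common convention that the original does not specify. `GaussianKernel` is a hypothetical helper.

```java
/** Hypothetical helper that builds a normalized 1D Gaussian kernel. */
final class GaussianKernel {
    private GaussianKernel() {}

    /**
     * Returns weights for offsets -radius..radius, where radius = ceil(3 * sigma).
     * The weights sum to 1, so blurring preserves overall brightness.
     */
    static double[] weights(double sigma) {
        if (sigma <= 0) {
            throw new IllegalArgumentException("sigma must be positive");
        }
        int radius = (int) Math.ceil(3.0 * sigma);
        double[] w = new double[2 * radius + 1];
        double sum = 0.0;
        for (int i = -radius; i <= radius; i++) {
            double v = Math.exp(-(i * i) / (2.0 * sigma * sigma));
            w[i + radius] = v;
            sum += v;
        }
        for (int i = 0; i < w.length; i++) {
            w[i] /= sum; // Normalize so the weights sum to 1 (up to rounding).
        }
        return w;
    }
}
```

Increasing `sigma` widens the kernel and spreads each light's glow further. This is what produces the "dustier atmosphere" look of a larger blur radius.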

The blurred layer is then composited with the original image:

Note that a darker background makes the effect more prominent:

A larger blur radius gives the effect of a dustier atmosphere:

See the completed Blender project file for the implementation described here.

Alternate implementation

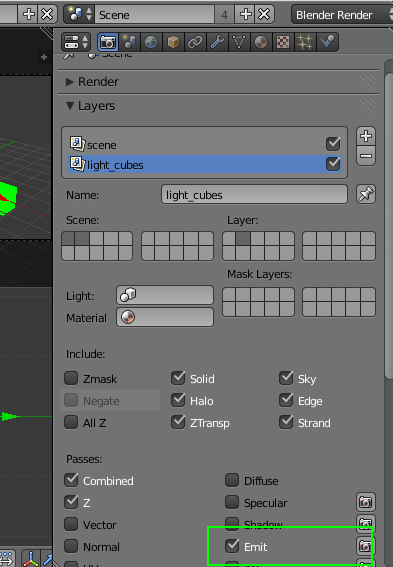

It's also possible to avoid the use of multiple layers by asking Blender to separate out the emissive parts of the image. The emissive sections are then processed as before. Emissive data can be added to the rendered image by selecting the 'Emit' option in the layer settings:

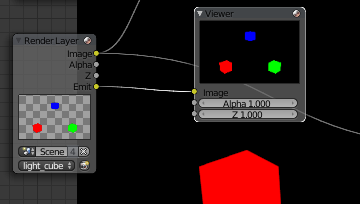

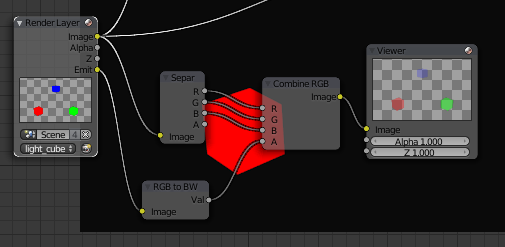

The emissive data then becomes available as a channel in the relevant render layer:

The downside of this approach is that the emissive data has no alpha channel, so it's necessary to synthesize one using a greyscale copy of the data:
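One way to synthesize the alpha channel is to use each pixel's greyscale value as its alpha. Bright emissive pixels then become opaque, and black pixels become fully transparent. The minimal sketch below assumes the Rec. 709 luma weights (0.2126, 0.7152, 0.0722). Blender's own greyscale conversion may use different coefficients depending on version and colour management. `EmitAlpha` is a hypothetical name.

```java
/** Hypothetical sketch: derive an alpha channel from emissive RGB data. */
final class EmitAlpha {
    private EmitAlpha() {}

    // Rec. 709 luma coefficients (an assumption; other weightings also work).
    private static final double WR = 0.2126, WG = 0.7152, WB = 0.0722;

    /** Returns {r, g, b, a}, where a is the greyscale value of the input clamped to [0, 1]. */
    static double[] withAlpha(double r, double g, double b) {
        double grey = WR * r + WG * g + WB * b;
        double a = Math.max(0.0, Math.min(1.0, grey));
        return new double[] {r, g, b, a};
    }
}
```

The colour is passed through unchanged; only the alpha is added. After blurring, dark regions therefore contribute nothing when composited, while bright emitters show through strongly.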

OpenGL implementation

Preamble

The OpenGL implementation is written in Java, using LWJGL for the underlying OpenGL bindings. It does not use any Java-specific or LWJGL-specific features and should be directly translatable to any language with OpenGL access.

The program uses the deprecated immediate mode for rendering solely because doing otherwise would require more supporting code.

The program consists of two classes: GlowMap.java and Utilities.java.

Implementation

Obviously, in OpenGL, there is no such thing as a "separate layer". However, it's easy to get the required separation by rendering in multiple passes using framebuffer objects. The general approach is as follows:

- Render the lit scene, including emissive objects, to a texture T0.

- Render only emissive objects to a texture T1.

- Blur the texture T1 with a GLSL shader, writing the result to a texture T2.

- Render a screen-aligned quad, textured with texture T0.

- Render a screen-aligned quad, textured with texture T2, possibly with a lower level of opacity depending on the desired effect.

Because a Gaussian blur is separable, the typical Gaussian blur shader performs it in two rendering passes (one horizontal, one vertical). The entire procedure therefore requires five rather inexpensive passes and is easily achievable in real time on modern graphics hardware. For simplicity, the technique is shown here in 2D, but the process is exactly the same for three-dimensional objects.
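A 2D Gaussian kernel is the product of two 1D kernels. Blurring horizontally and then vertically therefore gives the same result as one full 2D blur, at much lower cost: 2n rather than n² samples per pixel for an n-tap kernel. The sketch below performs the two passes on the CPU over a greyscale image stored in a row-major float array. It stands in for what the horizontal and vertical blur shaders do on the GPU. Edge samples are clamped, mimicking GL_CLAMP_TO_EDGE texture wrapping. The class name and the 3-tap example weights used with it are hypothetical.

```java
/** Hypothetical CPU version of the two-pass (separable) blur. */
final class SeparableBlur {
    private SeparableBlur() {}

    /** One 1D pass: samples along x if horizontal is true, otherwise along y. */
    static float[] pass(float[] src, int width, int height, float[] kernel, boolean horizontal) {
        int radius = kernel.length / 2;
        float[] dst = new float[src.length];
        for (int y = 0; y < height; y++) {
            for (int x = 0; x < width; x++) {
                float acc = 0f;
                for (int k = -radius; k <= radius; k++) {
                    // Clamp sample coordinates to the image, like GL_CLAMP_TO_EDGE.
                    int sx = horizontal ? Math.min(width - 1, Math.max(0, x + k)) : x;
                    int sy = horizontal ? y : Math.min(height - 1, Math.max(0, y + k));
                    acc += kernel[k + radius] * src[sy * width + sx];
                }
                dst[y * width + x] = acc;
            }
        }
        return dst;
    }

    /** Horizontal pass into one buffer (like FbH), then vertical pass into another (like FbV). */
    static float[] blur(float[] src, int width, int height, float[] kernel) {
        float[] horizontalPass = pass(src, width, height, kernel, true);
        return pass(horizontalPass, width, height, kernel, false);
    }
}
```

For example, blurring a 5×5 image containing a single bright pixel at its centre with the kernel {0.25, 0.5, 0.25} gives 0.25 at the centre, 0.125 at its four direct neighbours and 0.0625 on the diagonals. Those are exactly the weights of the corresponding 3×3 2D kernel.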

The procedure for rendering is therefore:

- Load the necessary shading programs.

- Create a framebuffer, Fs, in which to hold the emissive parts of the scene.

- Create a framebuffer, FbH, in which to hold the horizontal pass of the blur shader.

- Create a framebuffer, FbV, in which to hold the vertical pass of the blur shader.

- Switch to framebuffer Fs and render only the emissive objects in the scene.

- Switch to framebuffer FbH and render a full-screen, screen-aligned rectangle textured using the texture that backs framebuffer Fs, and using the horizontal pass of the two-pass Gaussian blur shader.

- Switch to framebuffer FbV and render a full-screen, screen-aligned rectangle textured using the texture that backs framebuffer FbH, and using the vertical pass of the two-pass Gaussian blur shader.

- Switch to the default framebuffer (so that rendering will be visible on the screen) and render everything in the scene.

- Enable blending with glEnable(GL_BLEND) and select normal alpha compositing with glBlendFunc(GL_SRC_ALPHA, GL_ONE_MINUS_SRC_ALPHA).

- With the default framebuffer still active, render a full-screen, screen-aligned rectangle textured using the texture that backs framebuffer FbV.
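Assume blending is configured with glBlendFunc(GL_SRC_ALPHA, GL_ONE_MINUS_SRC_ALPHA) and the default GL_FUNC_ADD blend equation. Each fragment of the glow quad is then combined with the pixel already in the default framebuffer as src × α + dst × (1 − α). The following minimal CPU emulation covers that final step, assuming RGBA floats in [0, 1]. It includes a hypothetical `opacity` factor for toning the glow down. In a shader this factor would typically be a uniform multiplied into the fragment's alpha. `GlowBlend` is a hypothetical class.

```java
/** Hypothetical emulation of the final blend of the glow quad onto the scene. */
final class GlowBlend {
    private GlowBlend() {}

    /**
     * Emulates glBlendFunc(GL_SRC_ALPHA, GL_ONE_MINUS_SRC_ALPHA) with the default
     * GL_FUNC_ADD equation for the colour channels.
     *
     * @param glow    RGBA of the blurred glow texel (the incoming fragment)
     * @param scene   RGB already in the default framebuffer
     * @param opacity extra factor in [0, 1] applied to the glow's alpha
     * @return the blended RGB written back to the framebuffer
     */
    static double[] blend(double[] glow, double[] scene, double opacity) {
        double a = glow[3] * opacity;
        double[] out = new double[3];
        for (int c = 0; c < 3; c++) {
            out[c] = glow[c] * a + scene[c] * (1.0 - a);
        }
        return out;
    }
}
```

Where the emissive-only framebuffer was cleared to transparent black, the blurred alpha falls off smoothly around each light. The scene is therefore left untouched away from the lights and brightened progressively closer to them.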

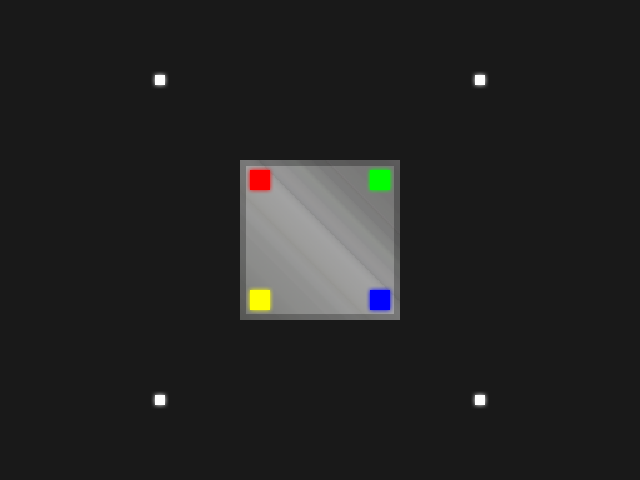

The provided demonstration program, GlowMap.java, can show each rendering pass (although the two passes of the Gaussian blur shader are shown combined as one). Press the 'R' key to switch between rendering passes.